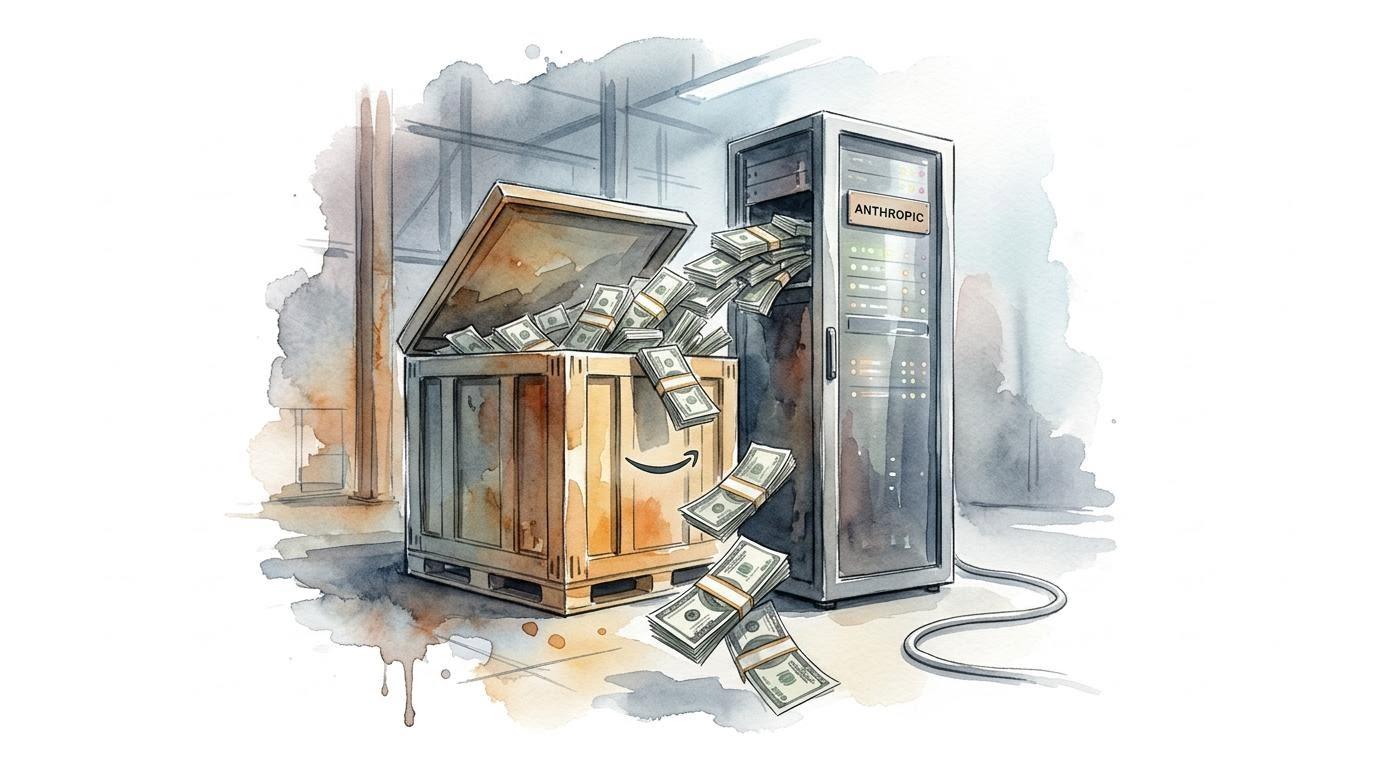

Amazon Plans Up to $25 Billion Anthropic Investment

Amazon plans to invest up to $25 billion in Anthropic as part of a broader AI infrastructure push tied to AWS. The move deepens an already close partnership and signals Amazon’s intent to control both the compute layer and the model layer. It also raises the stakes in Amazon’s cloud competition with Microsoft and Google.

- Amazon’s plan would put up to $25 billion into Anthropic as part of its AI infrastructure push.

- The investment deepens the AWS partnership and ties Anthropic’s model work more closely to Amazon’s cloud stack.

- The move also intensifies cloud competition with Microsoft and Google.